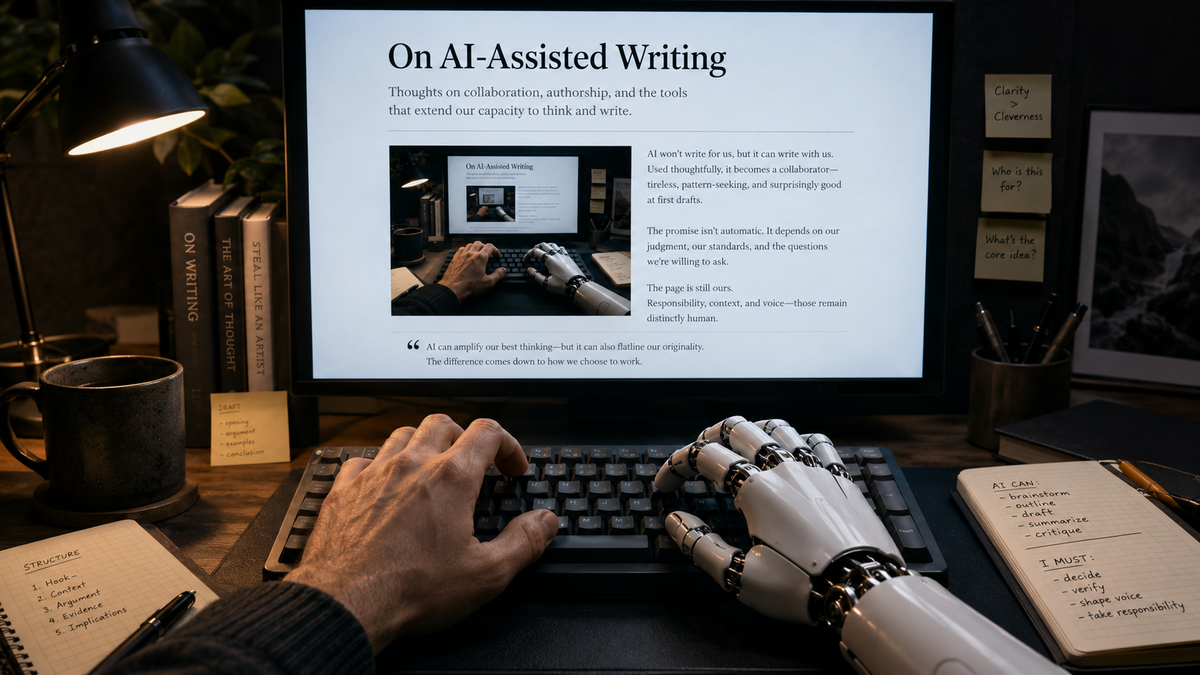

On AI-Assisted Writing

I use AI tools during the creation of my writing. They help me with research, structure, flow, grammar, spelling, and clarity.

The arguments, judgments, conclusions, and final responsibility for the work are my own. AI may assist the process, but it does not decide what I believe, what I publish, or what the piece is trying to convey.

There is a growing discomfort around writing that has been produced with the help of AI. Some of that discomfort is justified. Some of it is misplaced. Most of it, I think, comes from treating AI use as a binary condition.

Either a piece is human writing, or it is AI slop. That binary framing is too simple.

Writing now exists on a spectrum. At one end is a piece researched, written, edited, and published entirely by a human. At the other end is a piece generated entirely by a model, with little or no human judgment involved. Most serious use sits somewhere between those two points. A person may use AI to explore a topic, check grammar, improve flow, challenge an argument, summarize sources, or help structure a draft. These uses are not interchangeable. They do not carry the same implications, and they should not be treated as if they do.

The term “AI slop” captures something real, but it is too often used as if it settles the matter. It does not. There is a meaningful difference between lazy automated publishing and human work that uses AI as part of the process. The first replaces judgment. The second can support it.

The visceral reaction makes sense when a publisher presents a piece as if it came entirely from their own hand, while quietly outsourcing most of the thinking and writing to a model. The objection is not only about the tool. It is about the implied deception, and the intellectual dishonesty behind it. The reader is being asked to attribute effort, authorship, and judgment to someone who may not have supplied much of any of it.

That is why the reaction feels so close to the reaction people have to plagiarism. It is not exactly the same act, but it touches the same nerve. Someone is taking credit for intellectual work in a way that misrepresents what they actually contributed. There is a kind of chest-puffing in it: look at what I made, look at what I thought, look at what I wrote. If that claim is false, or even materially exaggerated, people are right to feel manipulated.

The distrust does not come only from the reader being duped; it also comes from the writer trying to borrow authority they have not earned.

Code seems to provoke a different reaction. AI-assisted programming is already common, and although people debate its risks, the discussion is usually less morally charged than it is with prose. I think part of the reason is that code has to do something. It has to run. It has to satisfy a requirement. Its behavior can be tested, inspected, and verified.

None of this makes AI-generated code safe or good. It can be quite the opposite. A piece of software can be functionally complete and still be insecure, brittle, unmaintainable, or wrong in ways that only become visible later. “Vibe coding” may produce something that appears to work, but appearance is not correctness. In software, the consequences eventually surface. Bad code creates operational debt. It creates security risk. It fails under pressure. The person who ships it, and the people who depend on it, pay the price.

The “reap what you sow” aspect changes how people perceive AI use in programming. If an experienced developer uses AI to speed up implementation, generate scaffolding, explore alternatives, or catch mistakes, the important question is not whether AI was involved. The important question is whether the developer can evaluate the result. Expertise still matters because the output has to survive contact with reality.

Writing is different.

A written piece does not fail in the same empirical way. It can sound fluent while being hollow. It can appear thoughtful while laundering someone else’s reasoning. It can carry the rhythm of expertise without containing much judgment. A reader can be persuaded that a piece reflects the mind of an individual when, in practice, very little individual thought went into it. This is the harder problem.

I do not think the answer is to pretend AI-assisted writing can be cleanly separated from human writing. It cannot. The boundary is already blurry, and it will only become harder to police as models improve. Detection tools may estimate whether a piece was written with AI, but I do not know how much trust to place in those tools. More importantly, they put the burden on the reader. If disclosure is absent, the reader has to investigate after the fact.

That is backwards.

The publisher knows how the piece was produced. The reader usually does not. Disclosure does not solve every problem, but it gives the reader enough information to make their own decision.

My own position is straightforward. I view AI as a tool in the writing process. I use it to save time, improve clarity, help with structure, catch grammar and spelling issues, and support research. I may use it to test the flow of an argument or identify gaps in a draft.

I do not want AI to decide what I think. I do not want it to replace the act of forming a view. I want it to help me express the view more clearly, check whether the structure holds, and reduce the mechanical drag around writing. That is a different use case from asking a model to generate a finished opinion and publishing it under my name.

At the same time, I do not think every reader will draw the line where I do. Some people will reject AI-assisted writing entirely. That is their right. Others will care only about whether the piece was useful, informative, accurate, or enjoyable. That is also defensible. What matters is that they are not forced to make that decision in the dark.

For me, two questions matter more than the purity of the process: did the piece convey something useful, and was the use of AI disclosed honestly?

The first question matters most. If a piece informs me, clarifies something, makes me think, or gives me a useful frame, I am not especially concerned with quantifying exactly which sentence came from a human and which sentence was shaped by a machine. That line is not only hard to measure; it may be the wrong line to focus on. A human can write useless prose without AI. A model can help produce clear prose under human direction. The presence of the tool does not settle the quality of the work.

The second question matters because it affects trust. Disclosure is not a confession. It is context. It tells the reader how the work was made, and it lets them decide how much that matters to them.

There is an analogy here with wristwatches, though it only goes so far. When quartz watches entered the market, traditional Swiss watchmakers treated them as inferior intrusions into a world built on craftsmanship. Mechanical watches represented precision, beauty, tradition, and human skill. Then cheap quartz watches began to dominate because they told time accurately, required less maintenance, and were accessible to far more people.

The analogy is imperfect. Writing is not a watch, and authorship is not the same thing as timekeeping. But there is a parallel in the reaction. A tool arrives that does some part of the job faster, cheaper, and often well enough for the practical purpose. People who value the older craft react with understandable suspicion. Some of that suspicion protects real values. Some of it becomes denial.

AI-assisted writing is not going away. Humans will use it to draft, edit, research, summarize, polish, and sometimes generate entire pieces. Some of that work will be bad. Some will be dishonest. Some will be useful. Some will be better than what the same person could have produced without the tool.

The reader will have to decide what they accept.

My view is that the obligation of the writer is to be honest about the process, responsible for the claims, and serious about the reasoning. If AI helps with that, I see no reason to pretend otherwise.

And yes, AI did assist me during the writing of this article.